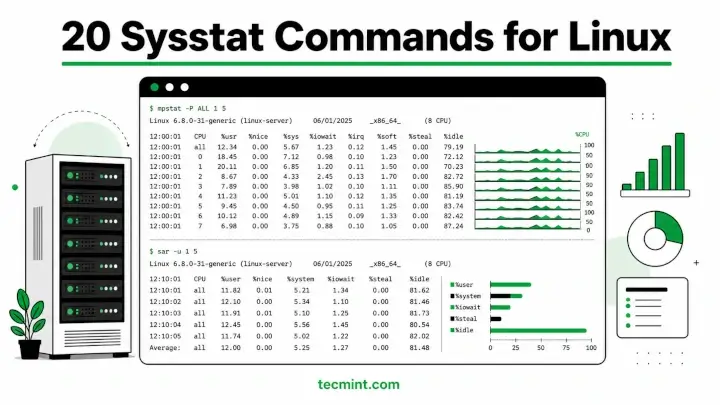

Sysstat is a collection of performance monitoring utilities for Linux that includes mpstat, pidstat, iostat, and sar. Together, they give you both a real-time and a historical view of CPU, memory, disk, and network activity on your system.

Most sysadmins start with the top command when something looks wrong, such as high CPU usage, system lag, or load spikes. It provides a quick snapshot, but it has a clear limitation: it only shows the current state and cannot tell you what caused the problem over time.

In real Linux performance issues, CPU is rarely the only factor. Bottlenecks usually come from several subsystems interacting:

- CPU scheduling pressure, such as run queue contention or uneven core usage.

- I/O wait, where processes are blocked waiting on storage (disk) operations.

- Memory pressure, including swapping, reclaim activity, or cache eviction.

- Interrupt handling, including uneven distribution of hardware interrupts across CPUs.

This is where sysstat becomes useful, as it provides structured and historical visibility instead of a single point-in-time snapshot.

Key tools in the sysstat suite:

- mpstat shows CPU usage per core, which helps you identify imbalance, high softirq load, or saturation on specific CPUs.

- pidstat tracks per-process CPU, memory, and I/O usage over time, which is useful for finding the processes behind a performance issue.

- sar collects system-wide historical metrics, which lets you correlate CPU, memory, load, and I/O behavior across time intervals instead of guessing from one moment.

These tools work consistently across modern Linux distributions, including Ubuntu and RHEL, as long as sysstat version 12.x or later is installed.

For disk-level analysis such as throughput, latency, and queue depth, iostat is used alongside vmstat as part of a deeper performance troubleshooting workflow.

Install Sysstat in Linux

Sysstat doesn't ship by default on most distros. Before running any of the commands below, confirm it's installed and check the version, because some output columns, such as %wait in pidstat and %ifutil in sar, were added in later releases and won't appear with older packages.

To install sysstat, use the appropriate command for your distribution:

sudo apt install sysstat         [On Debian, Ubuntu and Mint]
sudo dnf install sysstat         [On RHEL/CentOS/Fedora and Rocky/AlmaLinux]
sudo apk add sysstat             [On Alpine Linux]
sudo pacman -S sysstat           [On Arch Linux]
sudo zypper install sysstat      [On openSUSE]
sudo pkg install sysstat         [On FreeBSD]

Verify the install with:

mpstat -V

Output:

sysstat version 12.6.1
(C) Sebastien Godard (sysstat <at> orange.fr)

If you see command not found, either the install didn't complete or /usr/bin isn't in your PATH. Run which mpstat to confirm the binary exists. On Ubuntu, background data collection is also disabled by default. To turn it on, set ENABLED="true" in /etc/default/sysstat.
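If you check many hosts from a script, a small shell function can parse the version text and confirm it meets the 12.x baseline. This is a minimal sketch. sysstat_major and check_sysstat_version are hypothetical helper names, and the sketch assumes the version line looks like the sample output above.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helpers): parse the "sysstat version X.Y.Z"
# line printed by `mpstat -V` and check it against the 12.x baseline.

# sysstat_major TEXT -> prints the major version number, e.g. "12"
sysstat_major() {
  printf '%s\n' "$1" | awk '/sysstat version/ { split($3, v, "[.]"); print v[1]; exit }'
}

# check_sysstat_version TEXT -> returns 0 if the major version is >= 12
check_sysstat_version() {
  local major
  major=$(sysstat_major "$1")
  [ -n "$major" ] && [ "$major" -ge 12 ]
}

# On a live system; 2>&1 captures the version text whichever stream it uses:
#   check_sysstat_version "$(mpstat -V 2>&1)" || echo "sysstat is older than 12.x"
```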

mpstat: Monitoring Per-CPU Usage in Linux

mpstat reports CPU usage statistics across all processors or for individual cores, and on any multi-core system it’s far more useful than the single summary line in top, because you can immediately see whether load is spread evenly or pinned to one CPU.

1. Display Global CPU Statistics

Running mpstat without any options prints a single snapshot of average CPU usage across all processors since boot, which gives you a baseline before drilling into per-core or per-process numbers.

mpstat

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:22:10     CPU    %usr   %nice    %sys %iowait    %irq   %soft  %steal  %guest  %gnice   %idle
14:22:10     all   18.43    0.02    3.11    1.24    0.00    0.09    0.00    0.00    0.00   77.11

The %iowait column is the first one to check. Values consistently above roughly 10 to 15% usually mean the CPUs are sitting idle while processes wait on disk reads or writes, so adding more CPU won't help. The %soft column tracks time spent in software interrupt handlers. A spike there often points to heavy network traffic rather than a compute problem.
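To apply the 10 to 15% rule of thumb automatically, a short awk filter can scan mpstat output and print any row whose %iowait crosses a threshold. This is a minimal sketch, not a sysstat feature. flag_iowait and IOWAIT_MAX are hypothetical names. The filter finds columns by their header name and assumes 24-hour timestamps, as in the sample output.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read mpstat output on stdin and
# print a warning for each row whose %iowait exceeds a threshold.
# IOWAIT_MAX defaults to 10, the low end of the rough 10-15% rule.
flag_iowait() {
  awk -v max="${IOWAIT_MAX:-10}" '
    # Header row: locate the CPU and %iowait columns by name.
    /%iowait/ {
      for (i = 1; i <= NF; i++) {
        if ($i == "CPU") cpu = i
        if ($i == "%iowait") io = i
      }
      next
    }
    # Data rows: compare the %iowait field numerically with the threshold.
    io && NF >= io && ($io + 0) > (max + 0) {
      printf "CPU %s: %%iowait %s above %s\n", $cpu, $io, max
    }
  '
}

# Usage: mpstat -P ALL 2 5 | flag_iowait
```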

2. Show Statistics for Every Individual CPU

The -P ALL flag adds a separate line for each CPU below the all summary row. This immediately reveals whether one core is doing all the work while the rest sit idle.

mpstat -P ALL

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:23:05     CPU    %usr   %nice    %sys %iowait    %irq   %soft  %steal  %guest  %gnice   %idle
14:23:05     all   18.51    0.02    3.14    1.26    0.00    0.10    0.00    0.00    0.00   76.97
14:23:05       0   72.14    0.00    5.22    0.00    0.00    0.00    0.00    0.00    0.00   22.64
14:23:05       1   14.32    0.03    2.88    0.52    0.00    0.08    0.00    0.00    0.00   82.17
14:23:05       2   10.44    0.02    2.71    1.98    0.00    0.12    0.00    0.00    0.00   84.73
14:23:05       3    8.11    0.01    1.75    3.52    0.00    0.09    0.00    0.00    0.00   86.52

CPU 0 is at 72% user time while the other three are mostly idle. This is the classic signature of a single-threaded process pegging one core, and pidstat (covered below) will tell you exactly which process it is. You can also target a single CPU by number, as in mpstat -P 0. That is useful on systems with many cores when you only want to watch one.
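You can also script this imbalance check. The sketch below reads mpstat -P ALL output and treats 100 minus %idle as each core's busy percentage. It then compares the busiest core with the average of the rest. busiest_core is a hypothetical helper meant for a single snapshot. If the input holds several samples, the last sample for each CPU wins.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read `mpstat -P ALL` output on stdin,
# compute busy% = 100 - %idle for each numbered CPU, and print the busiest
# core next to the average busy% of all the other cores.
busiest_core() {
  awk '
    /%idle/ { for (i = 1; i <= NF; i++) { if ($i == "CPU") c = i; if ($i == "%idle") id = i }; next }
    # Only numbered CPU rows; skip the "all" summary and the Average: block.
    id && $1 != "Average:" && $c ~ /^[0-9]+$/ {
      if (!($c in busy)) n++
      busy[$c] = 100 - $id
    }
    END {
      if (n < 2) exit 1
      top = ""; total = 0
      for (k in busy) { total += busy[k]; if (top == "" || busy[k] > busy[top]) top = k }
      printf "CPU %s busy %.2f%%, other cores average %.2f%%\n", top, busy[top], (total - busy[top]) / (n - 1)
    }
  '
}

# Usage: mpstat -P ALL | busiest_core
```

On the sample output above, this prints CPU 0 busy 77.36%, other cores average 15.53%.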

3. Monitoring Live CPU Activity Over Time

Adding an interval in seconds and a count gives you live samples refreshed continuously, which is far more useful for spotting transient load spikes than a single averaged snapshot.

mpstat -P ALL 2 5

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:25:10     CPU    %usr   %nice    %sys %iowait    %irq   %soft  %steal  %guest  %gnice   %idle
14:25:12     all   21.34    0.00    2.88    0.00    0.00    0.00    0.00    0.00    0.00   75.78
14:25:12       0   74.50    0.00    2.00    0.00    0.00    0.00    0.00    0.00    0.00   23.50
14:25:12       1   15.22    0.00    3.11    0.00    0.00    0.00    0.00    0.00    0.00   81.67
14:25:12       2   11.04    0.00    2.88    0.00    0.00    0.00    0.00    0.00    0.00   86.08
14:25:12       3    9.12    0.00    1.44    0.00    0.00    0.00    0.00    0.00    0.00   89.44
...
Average:     all   19.87    0.01    3.02    0.88    0.00    0.08    0.00    0.00    0.00   76.14

The Average: row at the end summarises all 5 samples, so you can tell at a glance whether CPU 0 stayed hot across the full window or just had one brief spike. The 2 5 arguments mean "collect 5 readings, one every 2 seconds". You can drop the count to run indefinitely until you press Ctrl+C.

4. Monitoring Interrupt Statistics Per Processor

The -I flag reports interrupt statistics. It's the right tool when you're debugging a saturated network card or storage controller that's pinning all its interrupts to one core. The flag takes a keyword:

- SUM prints the total interrupts per second.

- CPU breaks hardware interrupts down per IRQ line and CPU.

- SCPU shows software interrupts (such as NET_RX and BLOCK) per CPU.

- ALL prints all three.

The output below is trimmed to the SUM and SCPU sections.

mpstat -I ALL

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:27:19     CPU    intr/s
14:27:19     all    742.18
...
14:27:19     CPU  NET_TX/s  NET_RX/s   BLOCK/s   SCHED/s     RCU/s
14:27:19       0      0.04      1.44      9.22     12.44     41.22
14:27:19       1      0.04      2.01      8.88     11.97     44.11
14:27:19       2      0.05      1.88      9.04     12.11     42.88
14:27:19       3      0.03      1.92      8.76     11.88     43.04

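In this sample, NET_RX/s is spread evenly across all four CPUs, which is healthy. Now suppose NET_RX/s were concentrated on a single CPU, and that CPU also showed high %soft in mpstat -P ALL. In that case, your NIC's interrupts are most likely affined to one core. Running irqbalance, or setting IRQ affinity manually through the /proc/irq/<N>/smp_affinity files, spreads that interrupt load across cores.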
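Each /proc/irq/<N>/smp_affinity file holds a hexadecimal bitmask of the CPUs that IRQ may be delivered to, written as comma-separated 32-bit groups. The hypothetical mask_to_cpus function below decodes such a mask into CPU numbers, which makes it easier to see whether a NIC's IRQs are pinned to one core. On most kernels, the neighbouring smp_affinity_list file already shows the same information as a list.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): decode a hex CPU mask as found in
# /proc/irq/<N>/smp_affinity (e.g. "00000000,00000005") into CPU numbers.
mask_to_cpus() {
  local hex=${1//,/}        # drop the 32-bit group separators
  local cpus=() bit=0 i b digit
  # Walk hex digits from least significant (rightmost) to most significant;
  # each digit covers 4 CPUs.
  for (( i = ${#hex} - 1; i >= 0; i-- )); do
    digit=$(( 16#${hex:i:1} ))
    for (( b = 0; b < 4; b++ )); do
      if (( digit & (1 << b) )); then
        cpus+=( "$(( bit + b ))" )
      fi
    done
    bit=$(( bit + 4 ))
  done
  local IFS=,
  printf '%s\n' "${cpus[*]}"
}
```

For example, mask_to_cpus f prints 0,1,2,3. To decode a live IRQ, run mask_to_cpus "$(cat /proc/irq/<N>/smp_affinity)", replacing <N> with the IRQ number from /proc/interrupts.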

5. Display All CPU and Interrupt Statistics Together

The -A flag is equivalent to running -u -I ALL -P ALL simultaneously, giving you a complete one-shot dump of CPU utilization and interrupt counts for every processor in a single command.

mpstat -A

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:30:01     CPU    %usr   %nice    %sys %iowait    %irq   %soft  %steal  %guest  %gnice   %idle
14:30:01     all   18.43    0.02    3.11    1.24    0.00    0.09    0.00    0.00    0.00   77.11
14:30:01       0   72.14    0.00    5.22    0.00    0.00    0.00    0.00    0.00    0.00   22.64
14:30:01       1   14.32    0.03    2.88    0.52    0.00    0.08    0.00    0.00    0.00   82.17
...
14:30:01     CPU    intr/s
14:30:01     all    742.18
14:30:01       0    188.14
14:30:01       1    192.44

To build a lightweight manual performance record that doesn't require sar to be configured, append the output to a date-stamped log file:

mpstat -A | sudo tee -a /var/log/mpstat-$(date +%F).log > /dev/null

This is useful on systems where you can't install or configure the full sysstat collection setup but still need to capture a snapshot before a maintenance window. The sudo tee step is needed because a plain >> redirection runs as your own user, which normally can't write to /var/log.

pidstat: Monitor Per-Process Resource Usage in Linux

pidstat is the tool you reach for after mpstat identifies a hot CPU, because it shows exactly which process or thread is responsible for the resource consumption.

Unlike top, it reports averages over each sampling interval, breaks down I/O per process, and gives thread-level visibility. Its plain-text output is easy to pipe and parse without any extra tooling.

6. Listing All Active Processes and CPU Usage

Running pidstat without arguments displays CPU usage for every process with non-zero activity, averaged since boot. To see what is consuming CPU right now, add an interval such as pidstat 2, which reports usage over each 2-second window instead.

pidstat

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:32:44      UID      PID    %usr %system  %guest   %wait    %CPU   CPU  Command
14:32:44        0        1    0.01    0.08    0.00    0.00    0.09     1  systemd
14:32:44        0      512    0.00    0.04    0.00    0.00    0.04     0  kworker/0:1H
14:32:44     1000     2841   18.44    0.88    0.00    0.00   19.32     0  python3
14:32:44     1000     3102    0.12    0.04    0.00    0.00    0.16     2  nginx

The %wait column, added in sysstat 11.5, shows time a process spent waiting to run on a CPU even though it was runnable, and sustained non-zero values here mean you have more work queued than available cores, which is CPU saturation rather than a slow process.

The CPU column tells you which core each process last ran on, which cross-references directly with the hot core you identified in example 2.

7. Viewing All Processes Including Idle Ones

The default view skips processes with zero CPU activity since boot. Adding -p ALL includes every process, including sleeping ones, which is useful when you need the full PID list for a specific application or want to confirm a service is actually running.

pidstat -p ALL

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:33:11      UID      PID    %usr %system  %guest   %wait    %CPU   CPU  Command
14:33:11        0        1    0.01    0.08    0.00    0.00    0.09     1  systemd
14:33:11        0        2    0.00    0.00    0.00    0.00    0.00     0  kthreadd
14:33:11        0        3    0.00    0.00    0.00    0.00    0.00     0  rcu_gp
14:33:11        0        4    0.00    0.00    0.00    0.00    0.00     0  rcu_par_gp
14:33:11     1000     2841   18.44    0.88    0.00    0.00   19.32     0  python3

Kernel threads such as kthreadd, kworker, and the rcu_* entries are not user processes. Sustained high CPU on them can indicate driver or filesystem problems worth investigating further. If a service you expected to see is missing from this output, it's not running at all.

8. Monitor Per-Process Disk I/O

The -d flag switches from CPU to disk I/O and shows kilobytes read and written per second for each process, which is the fastest way to identify which application is hammering your storage during a high-iowait episode.

pidstat -d 2

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:35:22      PID   kB_rd/s   kB_wr/s kB_ccwr/s iodelay  Command
14:35:24     1221      0.00    148.00      2.00       0  rsyslogd
14:35:24     2841    412.00     88.00      4.00      12  python3
14:35:24     3210      0.00     22.00      0.00       0  postgres

The kB_ccwr/s column shows kilobytes whose write-back to disk was cancelled. This typically happens when a process truncates or deletes a file while its dirty pages are still in the page cache. High values suggest a process that repeatedly writes temporary data and removes it before it is flushed.

The iodelay column counts the clock ticks the process spent blocked waiting for block I/O to complete. A non-zero value here alongside high %iowait from mpstat strongly suggests that this process is the I/O culprit.
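During a high-iowait episode, you can let awk pick out the heaviest process instead of eyeballing the table. This sketch sums kB_rd/s and kB_wr/s for each row and prints the PID and command with the highest total. top_io_process is a hypothetical name. Columns are located by header name, so an extra UID column doesn't break it.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read `pidstat -d` output on stdin and
# print the PID and command with the highest combined read+write rate.
top_io_process() {
  awk '
    # Header row: find the columns we need by name.
    /kB_rd\/s/ {
      for (i = 1; i <= NF; i++) {
        if ($i == "PID") p = i
        if ($i == "kB_rd/s") r = i
        if ($i == "kB_wr/s") w = i
        if ($i == "Command") cmd = i
      }
      next
    }
    # Data rows: keep the row with the largest read+write total.
    r && NF >= cmd && $p ~ /^[0-9]+$/ {
      total = $r + $w
      if (!found || total > best) { best = total; line = $p " " $cmd; found = 1 }
    }
    END { if (found) printf "%s %.2f kB/s\n", line, best; else exit 1 }
  '
}

# Usage: pidstat -d 2 1 | top_io_process
```

Fed the sample output above, it prints 2841 python3 500.00 kB/s.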

9. Show Per-Thread CPU Usage

The -t flag expands a process into its individual threads, which is essential for multi-threaded applications where one thread is responsible for all the elevated CPU while the rest are idle.

pidstat -t -p 2841 2 3

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:37:08      UID     TGID      TID    %usr %system  %guest   %wait    %CPU   CPU  Command
14:37:10     1000     2841        -   18.00    0.88    0.00    0.00   18.88     0  python3
14:37:10     1000        -     2841   17.50    0.50    0.00    0.00   18.00     0  |__python3
14:37:10     1000        -     2844    0.50    0.38    0.00    0.00    0.88     1  |__ThreadPool-1
14:37:10     1000        -     2845    0.00    0.00    0.00    0.00    0.00     2  |__GC-thread

Replace 2841 with the PID of the process you want to inspect, which you can get from pgrep if you don’t already have it.

The pipe-and-underscore hierarchy shows every child thread under the main process, so it’s immediately clear that the main thread is doing nearly all the work while ThreadPool-1 and GC-thread are barely active.

10. Monitor Memory Utilization Per Process

The -r flag reports virtual size, resident set size, and page fault rates per process. The -h flag prints each process's statistics on a single line, with the timestamp in seconds since the epoch and no final averages, which makes the output easy to parse with scripts.

pidstat -rh 2 3

Output:

#     Time   UID       PID  minflt/s  majflt/s     VSZ     RSS   %MEM  Command
1746353842  1000      2841   3412.00      0.00  812448  312200   7.68  python3
1746353842  1000      3102    128.22      0.00  142312   44820   1.10  nginx
1746353842     0      1201    644.00      0.00  506728  316788   7.80  Xorg

The majflt/s column is the one to watch. A major page fault means the kernel had to read the page from disk, either from swap or from a memory-mapped file. If you see sustained non-zero values here together with swap-in activity in sar -W, the system is actively swapping and this process is paying the price.

Minor faults (minflt/s) are cheap, because the page is already in RAM and the kernel only has to map it. Major faults are expensive and will noticeably degrade application response times.
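Because -h puts each process on one line, its output is easy to filter. The sketch below (major_faulters is a hypothetical name) prints only the processes with a non-zero majflt/s. It assumes the header line begins with a lone #, as in the sample above, and compensates for the one-field shift that the # causes.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read `pidstat -rh` output on stdin and
# list processes with a non-zero major page fault rate (majflt/s).
major_faulters() {
  awk '
    # The -h header starts with a lone "#", which shifts each name one field
    # to the right of the data it describes, so subtract that offset.
    /majflt\/s/ {
      off = ($1 == "#") ? 1 : 0
      for (i = 1; i <= NF; i++) {
        if ($i == "PID") p = i - off
        if ($i == "majflt/s") m = i - off
        if ($i == "Command") c = i - off
      }
      next
    }
    m && NF >= c && ($m + 0) > 0 { printf "%s %s majflt/s=%s\n", $p, $c, $m }
  '
}

# Usage: pidstat -rh 2 3 | major_faulters
```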

11. Filter Processes by Name

The -G flag filters output to only processes whose command name matches a string, so you don’t have to scroll through the full process list to find the application you’re watching.

pidstat -G nginx

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:40:18      UID      PID    %usr %system  %guest   %wait    %CPU   CPU  Command
14:40:18     1000     3102    0.12    0.04    0.00    0.00    0.16     2  nginx
14:40:18     1000     3103    0.08    0.02    0.00    0.00    0.10     3  nginx

Combine with -t to expand into threads by running pidstat -t -G nginx, or add an interval for continuous monitoring with pidstat -G nginx 2.

If -G returns nothing, the pattern doesn't match the command name that pidstat sees, which can differ from the service name. Check the actual command string with ps aux | grep nginx before assuming the service isn't running.

12. Show Real-Time Scheduling Priority and Policy

The -R flag reports the scheduling policy and real-time priority for each process, and it’s worth running when a latency-sensitive application isn’t getting CPU time despite low overall system utilisation.

pidstat -R

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:41:33      UID      PID  prio  policy  Command
14:41:33        0        3    99    FIFO  migration/0
14:41:33        0        5    99    FIFO  migration/1
14:41:33        0       14    99    FIFO  watchdog/0
14:41:33     1000     4821    20  NORMAL  java

Kernel migration and watchdog threads run at priority 99 with FIFO scheduling, which is expected behaviour. A user process with a FIFO or RR policy and a high priority is different: it preempts every normal-priority task on that CPU until it blocks or yields. Finding such a process here explains why other processes are getting starved.
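To spot real-time user processes without scanning every kernel thread, you can filter the -R output by policy. This sketch makes one stated assumption: kernel threads run as UID 0, so it skips root-owned rows. That also hides any real-time daemon you run as root. rt_user_tasks is a hypothetical name.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read `pidstat -R` output on stdin and
# print non-root processes running under a real-time policy (FIFO or RR).
# Assumption: kernel threads run as UID 0, so root-owned rows are skipped;
# drop the UID check if your real-time suspects run as root.
rt_user_tasks() {
  awk '
    /policy/ {
      for (i = 1; i <= NF; i++) {
        if ($i == "UID") u = i
        if ($i == "PID") p = i
        if ($i == "prio") pr = i
        if ($i == "policy") pol = i
        if ($i == "Command") c = i
      }
      next
    }
    pol && ($pol == "FIFO" || $pol == "RR") && $u != 0 {
      printf "%s %s %s prio=%s\n", $p, $c, $pol, $pr
    }
  '
}

# Usage: pidstat -R | rt_user_tasks
```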

sar: System Activity Reporter for Historical Linux Performance

Where mpstat and pidstat give point-in-time views, sar is the historical tool. It collects system activity data in the background (through cron or, on many current distributions, a systemd timer) and lets you replay any time window from the past.

This means you can investigate a performance problem that happened at 3am without being awake at 3am. That makes sar collection the single most valuable thing in this article to set up before you need it.

13. Collect CPU Statistics and Save to a Binary Log

The -u flag reports CPU utilisation, -o saves raw data to a binary file for later replay, and the 2 5 arguments collect 5 samples at 2-second intervals, which gives you a short capture window you can save and share during an incident.

sar -u -o /tmp/sarfile 2 5

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:44:10       CPU     %user     %nice   %system   %iowait    %steal     %idle
14:44:12       all     22.14      0.00      3.44      0.00      0.00     74.42
14:44:14       all     19.88      0.00      2.98      0.52      0.00     76.62
14:44:16       all     24.32      0.00      3.11      0.00      0.00     72.57
14:44:18       all     21.44      0.00      3.22      0.00      0.00     75.34
14:44:20       all     20.88      0.00      3.08      0.00      0.00     76.04
Average:       all     21.73      0.00      3.17      0.10      0.00     75.00

To replay the saved file, run sar -u -f /tmp/sarfile, and to convert it to CSV for graphing, use sadf -d /tmp/sarfile. The binary format is not human-readable directly, so always read it back through sar -f or sadf.

14. Set Up Automatic Collection with Cron

Instead of running sar interactively, configure it to collect data in the background continuously, which gives you historical data to replay whenever you need to investigate an incident that already happened.

Add these 2 entries to root’s crontab with sudo crontab -e:

# Collect system activity every 10 minutes
*/10 * * * * /usr/lib/sysstat/sa1 1 1
# Generate daily human-readable report at 23:53
53 23 * * * /usr/lib/sysstat/sa2 -A

Verify data is being collected:

ls -lh /var/log/sysstat/

Output:

total 2.1M
-rw-r--r-- 1 root root 412K May  3 23:53 sa03
-rw-r--r-- 1 root root 388K May  4 14:45 sa04
-rw-r--r-- 1 root root  44K May  4 14:45 sar04

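On Ubuntu/Debian, the sa1 script lives at /usr/lib/sysstat/sa1. On RHEL/Rocky Linux it is usually /usr/lib64/sa/sa1, and the data files go to /var/log/sa instead of /var/log/sysstat. Because sa1 is not on the PATH, which sa1 won't find it. To confirm the path, list the package contents with dpkg -L sysstat | grep sa1 or rpm -ql sysstat | grep sa1. Also check whether the package already ships a collection job in /etc/cron.d/sysstat or a sysstat-collect.timer unit, so you don't end up collecting twice.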

If the cron runs but /var/log/sysstat/ stays empty on Ubuntu, check that ENABLED="true" is set in /etc/default/sysstat, because Ubuntu ships with collection disabled by default.
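A quick way to rule out the disabled-collection case is to test that file directly. The hypothetical sysstat_collection_enabled function below returns success only if an uncommented ENABLED="true" line is present. It checks the file contents only, not whether the collection job is actually running.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): report whether /etc/default/sysstat
# (or another file passed as $1) enables background collection.
sysstat_collection_enabled() {
  local file=${1:-/etc/default/sysstat}
  # Match an uncommented ENABLED="true" line, with or without quotes.
  grep -Eq '^[[:space:]]*ENABLED="?true"?[[:space:]]*$' "$file"
}

# Usage:
#   sysstat_collection_enabled || echo 'set ENABLED="true" in /etc/default/sysstat'
```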

15. Check Run Queue and Load Average History

The -q flag reports run queue length, total process count, load averages, and blocked process count, which together tell you whether the system has more runnable work than it can process and whether that’s a CPU saturation or I/O blocking problem.

sar -q 2 5

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:50:11   runq-sz  plist-sz   ldavg-1   ldavg-5  ldavg-15   blocked
14:50:13         3       512      2.44      1.88      1.42         0
14:50:15         4       514      2.51      1.90      1.43         1
14:50:17         5       516      2.60      1.91      1.43         2
14:50:19         2       514      2.48      1.90      1.43         0
14:50:21         1       512      2.41      1.89      1.43         0
Average:         3       514      2.49      1.90      1.43         1

As a rule of thumb, a runq-sz consistently above the number of CPUs (4 on this system) means processes are queuing for CPU time rather than running, which is CPU saturation.

The blocked column counts tasks currently blocked waiting for I/O to complete (typically disk I/O). A non-zero value here alongside high %iowait from sar -u points to a storage bottleneck rather than a CPU problem.
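You can check the runq-sz rule of thumb against the Average: row. This sketch (runq_check is a hypothetical name) compares the average runq-sz with the CPU count, which comes from nproc unless you pass one, and prints the blocked average alongside it. It assumes 24-hour timestamps, so that the Average: row lines up with the header.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): compare the average runq-sz from
# `sar -q` output on stdin with the CPU count (default: nproc).
runq_check() {
  local cpus=${1:-$(nproc)}
  awk -v cpus="$cpus" '
    /runq-sz/ { for (i = 1; i <= NF; i++) { if ($i == "runq-sz") q = i; if ($i == "blocked") b = i }; next }
    # Average: replaces the timestamp, so field positions still line up.
    q && $1 == "Average:" {
      state = (($q + 0) > (cpus + 0)) ? "CPU saturation likely" : "run queue within CPU count"
      printf "avg runq-sz=%s cpus=%s blocked=%s: %s\n", $q, cpus, (b ? $b : "n/a"), state
      found = 1
    }
    END { if (!found) exit 1 }
  '
}

# Usage: sar -q 2 5 | runq_check
```

With the sample above, runq_check 4 reports the run queue as within the CPU count, since the average runq-sz is 3.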

16. Check Filesystem Usage Over Time

The -F flag reports free and used space plus inode usage for every mounted filesystem. Because it feeds into the sar historical log, you can track when a filesystem started filling up rather than only knowing it's full right now. This works only if sadc collects filesystem data; the sar man page notes that this requires the sadc option -S XDISK.

sar -F 2 4

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:52:01  MBfsfree  MBfsused   %fsused  %ufsused     Ifree     Iused    %Iused  FILESYSTEM
14:52:03     18240      9760     34.84      0.00  12188422    811578      6.24  /dev/sda1
14:52:03      2048       952     31.74      0.00   1048320    176480     14.40  /dev/sdb1

The %fsused column is the percentage of space used as seen by root. The %ufsused column is the percentage used as seen by an unprivileged user, which counts the blocks reserved for root as already used. So when %ufsused reaches 100% but %fsused is still lower, regular users can no longer write but root still can.

A filesystem that fills silently overnight is one of the most common causes of application crashes that look like software bugs until you check disk space.

17. Monitor Network Interface Throughput

The -n DEV flag reports packets and bytes received and transmitted per second for each interface. Piping through grep -vw lo drops the loopback interface to keep the output focused on real traffic. The -w option matches lo only as a whole word, so an interface such as wlo1 is kept.

sar -n DEV 1 3 | grep -vw lo

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:54:08     IFACE   rxpck/s   txpck/s    rxkB/s    txkB/s   rxcmp/s   txcmp/s  rxmcst/s   %ifutil
14:54:09      eth0    412.00    388.00     42.14     38.22      0.00      0.00      0.00      0.40
14:54:10      eth0    844.22    812.44     88.22     82.14      0.00      0.00      0.00      0.84
14:54:11      eth0    388.12    366.00     40.44     38.88      0.00      0.00      0.00      0.40

The %ifutil column, added in sysstat 11.5, shows the percentage of interface bandwidth in use, and values above 80% on a 1Gbps interface mean you’re approaching the wire limit and should investigate whether traffic shaping, bonding, or a faster NIC is needed. Combine this with sar -n EDEV to check for packet errors and drops at the same time.

18. Report Block Device I/O Latency

The -d flag shows throughput and latency per block device. Comparing await with svctm gives a quick hint about whether you have a slow disk or an overloaded I/O queue.

sar -d 1 3

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:56:14       DEV       tps     rkB/s     wkB/s   areq-sz    aqu-sz     await     svctm     %util
14:56:15    dev8-0     88.00    412.00   1084.00     17.00      0.88     10.02      4.12     36.22
14:56:16    dev8-0    112.44    488.22   1244.00     15.44      1.12     12.44      4.88     44.12
14:56:17    dev8-0     76.22    344.88    922.00     16.88      0.72      8.88      3.88     29.66

The await value is the average time in milliseconds from when a request was queued until it completed. The svctm value is the time the device actually spent serving it. A large gap between them means requests spend most of their wait in the queue rather than being limited by disk speed. Treat that gap as a rough hint only: the sar man page warns that svctm is no longer reliable, and newer sysstat releases may not print it at all.

The aqu-sz column confirms this by showing the average queue depth, and values consistently above 1 on a single spinning disk indicate I/O saturation.
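To watch for queue saturation across many devices, filtering on aqu-sz is more robust than relying on svctm. The sketch below (flag_disk_queue is a hypothetical name) prints the rows whose aqu-sz exceeds a limit, which defaults to 1. It finds columns by header name, so it still works when svctm is absent.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read `sar -d` output on stdin and flag
# rows whose average queue depth (aqu-sz) exceeds a limit (default 1).
flag_disk_queue() {
  awk -v limit="${1:-1}" '
    /aqu-sz/ {
      for (i = 1; i <= NF; i++) {
        if ($i == "DEV") d = i
        if ($i == "aqu-sz") q = i
        if ($i == "await") a = i
      }
      next
    }
    q && NF >= q && ($q + 0) > (limit + 0) {
      printf "%s %s aqu-sz=%s await=%sms\n", $1, $d, $q, $a
    }
  '
}

# Usage: sar -d 1 3 | flag_disk_queue
```

On the sample above, only the 14:56:16 row (aqu-sz 1.12) is printed. Adding -p to sar -d shows device names such as sda instead of dev8-0.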

19. Report Memory Usage and Commit Statistics

The -r flag reports the full memory picture including free, used, cached, and committed memory, and the %commit column is the one that tells you whether the kernel has promised more memory than physically exists.

sar -r 1 3

Output:

Linux 6.8.0-45-generic (web01.tecmint.com)   05/04/2026   _x86_64_   (4 CPU)
14:58:22 kbmemfree kbmemused  %memused kbbuffers  kbcached  kbcommit   %commit  kbactive   kbinact   kbdirty
14:58:23   2142440   5907560     73.40    288012   2844120   6112448     37.82   3188400   2241644      1224
14:58:24   2138800   5911200     73.45    288016   2844888   6114220     37.83   3190144   2241788       988
14:58:25   2136320   5913680     73.48    288020   2845200   6118400     37.86   3191220   2241900       812
Average:   2139187   5910813     73.44    288016   2844736   6114989     37.84   3189921   2241777      1008

When %commit exceeds 100%, the kernel has promised more virtual memory to running processes than RAM and swap combined can back. That is normal with memory overcommit. However, if enough processes actually touch their committed memory at the same time, the system runs out of memory and the OOM killer starts terminating processes.
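The sar man page describes %commit as the memory needed for the current workload relative to RAM plus swap. Assuming it is computed from /proc/meminfo as Committed_AS / (MemTotal + SwapTotal), the hypothetical commit_pct function below reproduces that figure, so you can check it on a host without sysstat. Note that this differs from the kernel's CommitLimit, which is only enforced when vm.overcommit_memory is set to 2.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): approximate sar's %commit from
# /proc/meminfo as Committed_AS / (MemTotal + SwapTotal) * 100.
# Pass another file as $1 to test against saved meminfo output.
commit_pct() {
  awk '
    $1 == "MemTotal:"     { mem = $2 }
    $1 == "SwapTotal:"    { swap = $2 }
    $1 == "Committed_AS:" { commit = $2 }
    END {
      if (mem + swap == 0) exit 1
      printf "%.2f\n", commit * 100 / (mem + swap)
    }
  ' "${1:-/proc/meminfo}"
}

# Usage: commit_pct
```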

The kbdirty column shows memory pages written but not yet flushed to disk, and a high persistent value here means the kernel is holding a lot of data in memory that would be lost on a sudden power failure.

20. Export Historical sar Data as CSV

sadf -d reads a binary sar data file and outputs semicolon-delimited CSV, which you can open in a spreadsheet or pipe into awk for custom analysis. It's a convenient way to build performance graphs from the data sar collects automatically.

sadf -d /var/log/sysstat/sa04 -- -n DEV | grep -vw lo

Output:

hostname;interval;timestamp;IFACE;rxpck/s;txpck/s;rxkB/s;txkB/s;rxcmp/s;txcmp/s;rxmcst/s;%ifutil
web01;600;2026-05-04 08:00:02 UTC;eth0;288.44;241.22;29.14;24.08;0.00;0.00;0.00;0.28
web01;600;2026-05-04 08:10:02 UTC;eth0;312.88;288.44;31.82;29.04;0.00;0.00;0.00;0.32
web01;600;2026-05-04 09:00:02 UTC;eth0;1841.22;1724.88;188.44;174.12;0.00;0.00;0.00;1.84

The 09:00 row showing 1841 packets per second versus 288 at 08:00 is exactly the kind of traffic spike you’d completely miss if you were only watching live sar output, and this is why the cron setup in example 14 is worth doing before you have an incident.
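Rather than scanning the CSV by eye, you can have awk find the peak interval directly. This sketch (peak_rx is a hypothetical name) splits on semicolons and locates columns from the header. It strips the leading "# " that sadf normally prints before the header, skips the loopback interface, and prints the row with the highest rxpck/s.

```shell
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): read `sadf -d ... -- -n DEV` CSV on
# stdin and print the timestamp and interface with the highest rxpck/s.
peak_rx() {
  awk -F';' '
    # Header row: sadf usually prefixes it with "# ", so strip that first.
    /IFACE/ {
      sub(/^# */, "")
      for (i = 1; i <= NF; i++) {
        if ($i == "timestamp") t = i
        if ($i == "IFACE") f = i
        if ($i == "rxpck/s") r = i
      }
      next
    }
    r && $f != "lo" && ($r + 0) > best { best = $r + 0; at = $t " " $f " " $r }
    END { if (at == "") exit 1; print at }
  '
}

# Usage: sadf -d /var/log/sysstat/sa04 -- -n DEV | peak_rx
```

On the sample above, it prints the 09:00 row: 2026-05-04 09:00:02 UTC eth0 1841.22.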

Save the output to a file with the following command, and you have data ready to chart or share with your team.

sadf -d /var/log/sysstat/sa04 -- -n DEV > /tmp/network-$(date +%F).csv

If your team finally has a week of baseline data after setting this up, share this article with whoever does your incident reviews.

Conclusion

The sysstat suite works best as a diagnostic chain, and the typical flow on a sluggish system starts with sar -u to check recent CPU history, moves to mpstat -P ALL to identify hot cores, then lands on pidstat -p ALL 2 to name the process responsible, and finishes with pidstat -d or sar -d if I/O is involved.

The single most impactful thing you can do right now is set up the cron job from example 14 and let it collect a full week of baseline data before anything goes wrong, because diagnosing an incident with historical data takes minutes while doing it from scratch takes hours.

Run the following command after the first day to confirm data is flowing and the file is growing as expected.

sar -u -f /var/log/sysstat/sa$(date +%d)

What’s the most useful sar flag or pidstat option you use in production that didn’t make this list? Drop it in the comments.