Arm this week made available a free toolkit for analyzing agentic artificial intelligence (AI) workloads as they are being developed by DevOps and platform engineering teams.

Earlier this year, Arm unveiled a 3nm processor based on its Neoverse V3 architecture that is specifically designed for AI workloads. The Arm Performix toolkit provides system-wide analysis across metrics such as memory bandwidth, latency, cache efficiency and CPU utilization for workloads running on that processor. Additionally, Arm has included recipes for testing multiple classes of agentic AI workloads.

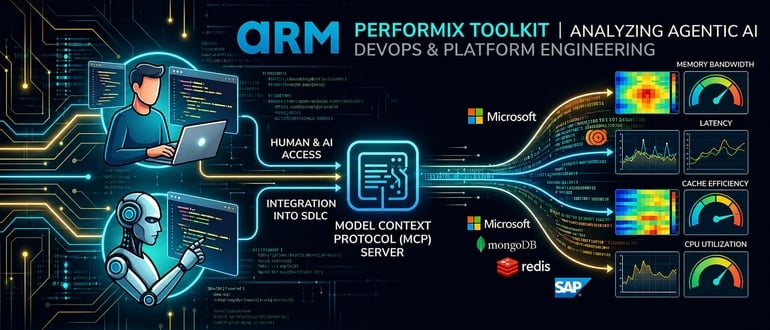

Developed in collaboration with Microsoft, MongoDB, Redis and SAP, the Arm Performix toolkit surfaces expert performance analysis that is designed to be easily integrated into an existing software development lifecycle (SDLC).

At the core of the Arm Performix toolkit is a Model Context Protocol (MCP) Server that enables either a human developer or an AI assistant to run Performix directly from tools such as GitHub Copilot, Kiro, Gemini and Codex.
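For readers unfamiliar with MCP, the sketch below shows roughly what a tool invocation looks like on the wire. MCP messages follow JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The tool name `run_performance_analysis` and its arguments are hypothetical placeholders chosen for illustration; they are not taken from Arm's documentation, and the actual tools exposed by the Performix MCP Server may differ.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request for MCP's `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments (assumptions, not Arm's actual API).
request = build_tool_call(
    request_id=1,
    tool_name="run_performance_analysis",
    arguments={
        "target": "my-agent-service",
        "metrics": ["memory_bandwidth", "latency", "cache_efficiency", "cpu_utilization"],
    },
)

# Serialize the message as an MCP client would before sending it to the server.
print(json.dumps(request, indent=2))
```

Because the request has the same shape whether a human-driven IDE or an autonomous agent builds it, the same server can serve both kinds of caller.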

Alex Spinelli, senior vice president for AI and developer platforms at Arm, said the goal is to create a tight feedback loop in which performance evaluation is embedded within the SDLC. Regardless of whether the Arm Performix toolkit is invoked by a human developer or an AI agent, the ability to track performance metrics will prove crucial in the age of AI, noted Spinelli.

Mitch Ashley, vice president and practice lead for software lifecycle engineering at the Futurum Group, said performance analysis is moving into the agentic build loop, callable by the same agents that write and test the code. Arm’s Performix toolkit reflects hardware vendors reaching upstream into the SDLC, with the MCP Server form factor positioning agents and humans at the same interface, he added.

Vendors that ship developer tooling without an MCP-native interface will be invisible to the agents driving build and test workflows. Performance, security, and quality tooling are converging on the same form factor, and that convergence determines who gets called during the build, noted Ashley.

It’s still early days as far as adoption of an Arm AI processor is concerned, but Meta, OpenAI, Cloudflare, and SAP have already signaled their intent to adopt it. Arm is betting that by providing free tools for analyzing agentic workloads, more software engineering teams will follow suit.

Ultimately, AI agents will prove to be yet another application that software engineering teams will build, deploy, update and manage. In some cases, those AI agents will need to run on a graphics processing unit (GPU) or AI accelerator, but more often than not they will run on traditional processors. That latter approach should, after all, prove more cost-effective as thousands of AI agents are deployed across the enterprise.

In the meantime, software engineering teams will undoubtedly rely more on AI coding tools to build AI agents that will be deployed via DevOps pipelines. The challenge now is identifying the providers of tools and platforms that will make it as simple as possible to fulfill that mission at a scale that will soon prove to be historically unprecedented.