SUSE today revealed it is collaborating with multiple providers of artificial intelligence (AI) agents that can manage IT infrastructure resources through integrations with the Model Context Protocol (MCP) server embedded in its platforms.

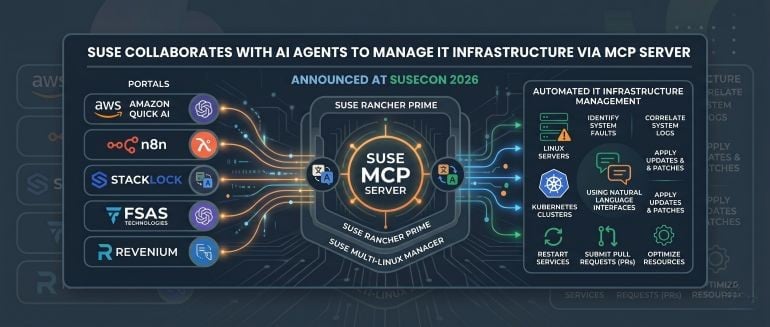

At the SUSECON 2026 conference, SUSE announced that AI agents from Fsas Technologies, n8n, Revenium, Stacklok and Amazon Web Services (AWS) can invoke the MCP server that SUSE has embedded in its Rancher Prime and SUSE Multi-Linux Manager offerings.

Rick Spencer, general manager, engineering at SUSE, said that capability makes it possible, for example, for the Amazon Quick AI agent that AWS developed to automate workflows for managing IT infrastructure resources such as Linux servers and Kubernetes clusters.

Ultimately, any AI agent that can access the SUSE MCP server should be able to, for example, identify system faults in Kubernetes clusters or Linux servers, correlate system logs, and submit a pull request (PR) or a patch to restart a service or apply updates.
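For readers who want a concrete picture of what invoking an MCP server involves: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds such a request and sends nothing. The tool name `list_unhealthy_pods` and its `cluster` argument are hypothetical placeholders, not names taken from SUSE's MCP server.

```python
import json
from itertools import count

# Monotonically increasing JSON-RPC request ids.
_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call("list_unhealthy_pods", {"cluster": "prod-eu-1"})
print(json.dumps(request, indent=2))
```

An agent would send a message of this shape over whichever transport the server exposes and read the tool's result from the matching JSON-RPC response.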

The goal is to provide a secure way for AI agents to monitor, troubleshoot and optimize infrastructure across any distribution of Linux or Kubernetes using SUSE Multi-Linux Manager or the Rancher Prime management platform, said Spencer.

The degree to which organizations will enable AI agents to manage IT infrastructure will naturally vary by use case, but it’s clear that with the advent of MCP, not every platform is going to require its own dedicated AI agent. In some instances, an AI agent developed by a third party will simply invoke MCP to automate a task. In other instances, however, an AI agent might communicate with another AI agent that has been specifically trained to perform IT infrastructure tasks.

Regardless of approach, the days when IT teams relied on graphical tools to manage IT environments are coming to an end, said Spencer. Instead, IT teams will use natural language interfaces to instruct AI agents to perform specific tasks, he added.

In the meantime, MCP itself remains a work in progress. The next iteration of MCP will enable IT teams to deploy stateless servers, making it easier to run AI tools and applications at greater scale. The technical oversight committee for MCP, now being advanced under the auspices of the Agentic AI Foundation (AAIF), is also working on a task capability that will make it easier to run long-running autonomous workflows, rather than relying on a request-and-response mechanism that would otherwise need to be re-invoked multiple times.
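The general idea behind a task-style interface can be sketched with a toy example: the client starts a long-running job once, receives a handle, and then checks that handle until the job finishes. Everything below is an in-memory illustration under assumed names (`ToyTaskServer`, `start`, `status`); it is not the MCP task API, whose design is still being worked out.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyTaskServer:
    """In-memory stand-in for a server that runs long jobs as tasks."""

    _ids: count = field(default_factory=lambda: count(1))
    _remaining: dict = field(default_factory=dict)

    def start(self, steps: int) -> int:
        """Register a job needing `steps` units of work; return its handle."""
        task_id = next(self._ids)
        self._remaining[task_id] = steps
        return task_id

    def status(self, task_id: int) -> str:
        """Report task state; each check simulates one unit of progress."""
        if self._remaining[task_id] > 0:
            self._remaining[task_id] -= 1
            return "working"
        return "completed"


def run_to_completion(server: ToyTaskServer, steps: int) -> tuple[int, int]:
    """Start one task, then poll its handle until it reports completion."""
    task_id = server.start(steps)
    polls = 0
    while server.status(task_id) != "completed":
        polls += 1
    return task_id, polls


server = ToyTaskServer()
task_id, polls = run_to_completion(server, steps=3)
print(task_id, polls)
```

The point of the pattern is that the work is submitted once and tracked by its handle, instead of the original request being issued again for every step.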

Additionally, maintainers of the MCP project are working on a triggers capability that will enable servers to initiate an action, rather than always relying on an MCP client to do so. Support for retry semantics, expiration policies, native streaming and reusable skills based on domain knowledge is also expected to be added in 2026.

Finally, updates to the Python and TypeScript software development kits (SDKs) will provide access to more efficient MCP clients and servers.

Meanwhile, DevOps teams should expect the number of AI agents that can manage IT infrastructure to proliferate rapidly in the days and months ahead.