Every team deploying AI agents in DevOps eventually faces the same design question, and it’s more consequential than it first appears: How much should the agent do on its own?

The question sounds like a settings dial — more autonomy here, less there. In practice, it is a governance question, an engineering question, and an organizational trust question bundled together.

This article gives you a framework for thinking through the autonomy decision — what factors actually determine where on the copilot-to-autopilot spectrum a specific action should sit, and how to build the guardrails that make the decision defensible.

The Spectrum Isn’t Binary

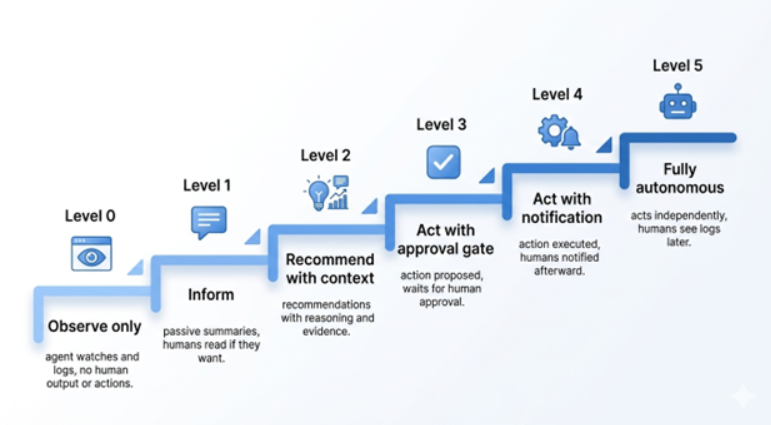

The framing of “human in the loop vs. fully autonomous” is too coarse to be useful in practice. Real DevOps agent deployments live somewhere on a more granular spectrum:

Level 0 — Observe only: The agent watches and logs. No output to humans, no actions. Used for baselining and evaluation before deployment.

Level 1 — Inform: The agent surfaces findings passively — posts a summary to a Slack channel, adds context to an existing incident thread. Humans read it if they want to.

Level 2 — Recommend with context: The agent surfaces a recommendation with explicit reasoning attached. “I recommend restarting the pods in service X because the error rate spiked 400% two minutes after the last deployment. Here’s the relevant log excerpt.” Humans decide.

Level 3 — Act with approval gate: The agent proposes a specific action and waits for explicit human approval before executing. The approval request includes the action, the reasoning, and the expected outcome.

Level 4 — Act with notification: The agent executes the action and notifies humans afterward. A human can override within a defined window.

Level 5 — Fully autonomous: The agent acts without notification. Humans see the result in logs or postmortems.

Most DevOps teams should have agents operating at Levels 1–3 for the vast majority of their use cases right now. Level 4 is appropriate for a narrow, carefully defined class of actions. Level 5 is appropriate for even fewer — and only after a documented track record at Level 4.
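One way to make the spectrum concrete in agent code is an ordered enumeration, so a policy can compare levels directly instead of matching on strings. This is a minimal Python sketch. The `AutonomyLevel` name and both helper functions are hypothetical and not part of any specific agent framework.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Hypothetical encoding of the copilot-to-autopilot spectrum.

    IntEnum keeps the levels ordered, so policies can compare them.
    """
    OBSERVE = 0     # watch and log; no output, no actions
    INFORM = 1      # passive findings, e.g. a channel summary
    RECOMMEND = 2   # recommendation with reasoning; humans decide
    APPROVE = 3     # propose an action, wait for explicit approval
    NOTIFY = 4      # act, then notify; humans can override in a window
    AUTONOMOUS = 5  # act without notification


def can_change_system(level: AutonomyLevel) -> bool:
    """Levels 0-2 never act; from Level 3 up the agent can execute."""
    return level >= AutonomyLevel.APPROVE


def acts_before_approval(level: AutonomyLevel) -> bool:
    """Levels 4 and 5 execute before any human has signed off."""
    return level >= AutonomyLevel.NOTIFY
```

Because the levels are ordered, a rule such as "never above Level 3 for this action" reduces to a plain `min()` over integers.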

The Four Factors That Determine the Right Level

For any specific action your agent might take, four factors should determine where on the spectrum it sits.

- Reversibility

The single most important factor. An action that can be trivially undone — adding a label, posting a message, running a read-only query — can tolerate a higher level of autonomy than an action that can’t. A pod restart is reversible but has customer impact. A database schema migration is largely irreversible. A cache flush is reversible but might trigger cascading load. A deployment rollback is reversible but takes time.

Map your agent’s action space by reversibility before you assign autonomy levels. Any irreversible or difficult-to-reverse action should require human approval by default, regardless of the agent’s confidence.
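The reversibility map can live in code as a simple catalogue, with the approval-by-default rule applied to anything difficult or impossible to undo. This is a sketch under stated assumptions. The action names, the `Reversibility` categories, and `max_autonomy_for` are illustrative. Treating unknown actions as irreversible is a conservative choice assumed here.

```python
from enum import Enum


class Reversibility(Enum):
    TRIVIAL = "trivial"            # undo is instant and free
    COSTLY = "costly"              # undoable, but with impact or delay
    DIFFICULT = "difficult"        # undo is slow, manual, or partial
    IRREVERSIBLE = "irreversible"  # cannot realistically be undone


# Hypothetical catalogue built from the examples in the text.
ACTION_REVERSIBILITY = {
    "add_label": Reversibility.TRIVIAL,
    "post_message": Reversibility.TRIVIAL,
    "read_only_query": Reversibility.TRIVIAL,
    "restart_pod": Reversibility.COSTLY,          # customer impact
    "flush_cache": Reversibility.COSTLY,          # may trigger cascading load
    "rollback_deployment": Reversibility.COSTLY,  # takes time
    "schema_migration": Reversibility.IRREVERSIBLE,
}

APPROVAL_GATE = 3  # Level 3: act with approval gate
NO_CAP = 5         # reversibility alone imposes no limit


def max_autonomy_for(action: str) -> int:
    """Highest level that reversibility alone permits for an action.

    Actions missing from the catalogue are treated as irreversible,
    so new capabilities start behind the approval gate.
    """
    rev = ACTION_REVERSIBILITY.get(action, Reversibility.IRREVERSIBLE)
    if rev in (Reversibility.DIFFICULT, Reversibility.IRREVERSIBLE):
        return APPROVAL_GATE
    return NO_CAP
```

A cap of 5 here only means reversibility is not the limiting factor; the other factors still apply.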

- Blast Radius

How many users, services, or systems does this action affect? Restarting a single pod in a non-critical internal service has a small blast radius. Scaling down a fleet of pods serving your highest-traffic API has a large one. Modifying a shared ConfigMap that multiple services read has an unpredictable impact.

Blast radius interacts with reversibility: a large-blast-radius reversible action (restarting all pods in a deployment) is still higher risk than a small-blast-radius irreversible action (deleting a single test resource). Both factors matter.
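A simple way to let both factors count is to give each its own ceiling and take the stricter of the two. The tiers and numeric ceilings below are hypothetical, chosen only to illustrate the combination. A ceiling expresses how much autonomy an action may have, not a full risk ranking between actions.

```python
from enum import Enum


class Reversibility(Enum):
    # Value = highest autonomy level this factor allows (hypothetical).
    TRIVIAL = 5       # label, message, read-only query
    COSTLY = 4        # pod restart, cache flush, rollback
    IRREVERSIBLE = 3  # schema migration, deletion


class BlastRadius(Enum):
    # Value = highest autonomy level this factor allows (hypothetical).
    SMALL = 4          # one pod in a non-critical internal service
    LARGE = 3          # fleet serving the highest-traffic API
    UNPREDICTABLE = 2  # shared ConfigMap read by many services


def autonomy_ceiling(rev: Reversibility, blast: BlastRadius) -> int:
    """Each factor imposes its own ceiling; the stricter one wins."""
    return min(rev.value, blast.value)
```

Restarting every pod in a deployment (`COSTLY`, `LARGE`) lands at Level 3 even though the action is reversible, which is the interaction described above.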

- Agent Confidence and Signal Quality

Is the agent working from clean, unambiguous signals, or is it reasoning about a noisy, ambiguous situation? An agent that’s detecting a clear threshold breach (a metric 10x above its baseline, no recent deployments, the same failure seen twice before) deserves more trust than an agent that’s inferring a root cause from indirect signals.

This is hard to measure precisely, but it can be approximated. Well-defined success criteria for “high confidence” situations — specific signal patterns, specific prior incident matches, specific thresholds — let you build confidence-gated autonomy into the agent explicitly. If the confidence score is above the threshold, proceed at Level 4. If below, escalate to Level 3.
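Confidence-gated autonomy can be sketched by scoring the explicit criteria the agent can check, then comparing the score to a threshold. The `Signals` fields, the point weights, and the threshold of 90 are all assumptions for illustration. A real deployment would derive them from its own incident history.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    metric_to_baseline: float  # e.g. 10.0 means 10x the baseline
    recent_deployment: bool    # any deployment inside the lookback window
    prior_matches: int         # past incidents with the same signature


def confidence(s: Signals) -> int:
    """Hypothetical 0-100 score from explicit, checkable criteria."""
    score = 0
    if s.metric_to_baseline >= 10.0:
        score += 40  # clear threshold breach
    if not s.recent_deployment:
        score += 30  # no deploy competing as an explanation
    if s.prior_matches >= 2:
        score += 30  # the same failure has occurred before
    return score


def gated_level(s: Signals, threshold: int = 90) -> int:
    """Above the threshold: Level 4 (act with notification).
    Otherwise: escalate to Level 3 (act with approval gate)."""
    return 4 if confidence(s) > threshold else 3
```

For example, `Signals(12.0, False, 2)` scores 100 and proceeds at Level 4, while the same spike right after a deployment scores 70 and escalates to Level 3. The gated level would still be subject to the reversibility and blast-radius limits.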

- Time Sensitivity

Some failures get significantly worse if action is delayed by the time it takes for a human to respond. A memory leak that will OOM a pod in the next 90 seconds may genuinely warrant automated intervention without waiting for approval. A misconfigured alert that’s been firing for three hours can wait for a human to decide how to respond.

Time sensitivity is the factor that most justifies moving up the autonomy scale — but it’s also the factor most often used to rationalize autonomy that isn’t actually warranted. Be honest about whether the failure actually gets worse with a 5-minute human response delay, or whether it just feels urgent.
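That honesty check can be written down: compare how soon the failure causes harm with how long a human realistically takes to respond, and only then consider moving from Level 3 to Level 4. The five-minute default follows the example in the text. The function name and the `ceiling` parameter (the highest level the action's other factors allow) are hypothetical.

```python
from datetime import timedelta


def level_with_urgency(current: int, ceiling: int, time_to_impact: timedelta,
                       human_response: timedelta = timedelta(minutes=5)) -> int:
    """Move from Level 3 to Level 4 only when both conditions hold:
    the failure lands before a human could respond, and the action's
    other factors (its ceiling) already allow Level 4."""
    delay_hurts = time_to_impact < human_response
    if current == 3 and ceiling >= 4 and delay_hurts:
        return 4
    return current
```

A pod that will OOM in 90 seconds qualifies; a problem that will look the same in three hours does not, and urgency never lifts an action past its ceiling.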

The Approval Gate: Design It to Be Used

The biggest failure in human-in-the-loop systems is approval fatigue: too many requests, too little context, and people start approving blindly.

A good approval gate should:

- Be decision-ready: Include action, reason, expected outcome, and risk — so someone can decide in seconds.

- Have a timeout plan: If no response, define what happens (escalate, fallback, or abandon). Never stall.

- Fire at the right frequency: If approvals fire constantly, the design is wrong. Batch related actions or automate the low-risk ones.
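The first two properties can be carried by the request itself. A minimal sketch, assuming a hypothetical `ApprovalRequest` type and a `resolve` function that maps a reviewer's response, or silence, to a next step. The field names and response strings are illustrative, and delivery (chat, pager, ticket) is left out.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class OnTimeout(Enum):
    ESCALATE = "escalate"  # page the next responder
    FALLBACK = "fallback"  # take a pre-agreed, safer action
    ABANDON = "abandon"    # drop the action and record why


@dataclass(frozen=True)
class ApprovalRequest:
    action: str
    reason: str
    expected_outcome: str
    risk: str
    timeout_seconds: int
    on_timeout: OnTimeout

    def render(self) -> str:
        """Decision-ready text a reviewer can act on in seconds."""
        return (
            f"ACTION: {self.action}\n"
            f"WHY: {self.reason}\n"
            f"EXPECTED: {self.expected_outcome}\n"
            f"RISK: {self.risk}\n"
            f"NO RESPONSE IN {self.timeout_seconds}s: {self.on_timeout.value}"
        )


def resolve(req: ApprovalRequest, response: Optional[str]) -> str:
    """Turn a reviewer's response into a next step.

    Anything other than approve/reject, including None (timeout
    expired), follows the request's timeout plan, so it never stalls."""
    if response == "approve":
        return "execute"
    if response == "reject":
        return "abandon"
    return req.on_timeout.value
```

Making `on_timeout` a required field means no request can be created without a plan for silence.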

Building the Autonomy Track Record

Before increasing autonomy, prove reliability at the current level.

Track for each action:

- How often humans approve it

- How often the outcome is correct

- Whether humans modify it before approving

- When and why it’s rejected

Use this to decide readiness:

- ~95% approval with no changes → ready for more autonomy

- ~70% approval with frequent edits → needs improvement

Measure per action type, not overall — agents can be good at some tasks and weak at others.
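Per-action-type tracking can be as simple as grouping decision records and computing rates for each group. The sketch below assumes a hypothetical `Decision` record. The 95% and 70% cut-offs come from the rough guidance above. The minimum sample size and the requirement that outcomes also be correct at least 95% of the time are additional assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Decision:
    action_type: str
    approved: bool
    modified: bool         # a human edited the action before approving
    outcome_correct: bool  # judged after the fact


def readiness(history: List[Decision], min_samples: int = 20) -> Dict[str, str]:
    """Verdict per action type, never pooled across types."""
    by_type: Dict[str, List[Decision]] = defaultdict(list)
    for d in history:
        by_type[d.action_type].append(d)

    verdicts: Dict[str, str] = {}
    for action_type, ds in by_type.items():
        n = len(ds)
        if n < min_samples:
            verdicts[action_type] = "insufficient data"
            continue
        # Share approved exactly as proposed, and share with a correct outcome.
        clean = sum(d.approved and not d.modified for d in ds) / n
        correct = sum(d.outcome_correct for d in ds) / n
        if clean >= 0.95 and correct >= 0.95:
            verdicts[action_type] = "ready for more autonomy"
        elif clean <= 0.70:
            verdicts[action_type] = "needs improvement"
        else:
            verdicts[action_type] = "keep collecting"
    return verdicts
```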

The Actions That Should Always Stay at Level 3

Regardless of track record, there’s a class of actions that should always require human approval. This isn’t a temporary policy while you build confidence — it’s a permanent boundary.

- Any action affecting a production database (migrations, deletions, bulk updates)

- Any action that could cause data loss

- Any action affecting security configuration (IAM policies, network policies, secrets)

- Any action that affects more than a defined percentage of production capacity simultaneously

- Any action taken during a declared major incident, where the blast radius of a wrong action is already elevated
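The permanent boundary works best as the last check, applied after every other factor and regardless of track record. In this sketch the `ProposedAction` fields mirror the list above. The 10% capacity threshold is a hypothetical placeholder for the "defined percentage" each team sets for itself.

```python
from dataclasses import dataclass

APPROVAL_GATE = 3  # Level 3: act with approval gate

# Hypothetical threshold; each team defines its own percentage.
MAX_CAPACITY_FRACTION = 0.10


@dataclass(frozen=True)
class ProposedAction:
    touches_production_db: bool    # migrations, deletions, bulk updates
    may_lose_data: bool
    touches_security_config: bool  # IAM policies, network policies, secrets
    capacity_fraction: float       # share of production capacity affected at once
    during_major_incident: bool


def hard_limited(a: ProposedAction) -> bool:
    """True if the action falls in any permanently gated category."""
    return (
        a.touches_production_db
        or a.may_lose_data
        or a.touches_security_config
        or a.capacity_fraction > MAX_CAPACITY_FRACTION
        or a.during_major_incident
    )


def final_level(requested: int, a: ProposedAction) -> int:
    """Cap at Level 3 for gated categories, whatever was requested."""
    return min(requested, APPROVAL_GATE) if hard_limited(a) else requested
```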

These aren’t limits imposed by lack of trust in the agent. They are limits imposed by the nature of the actions themselves. The cost of a wrong autonomous action in these categories is high enough that human judgment in the loop is worth the latency.

The Bottom Line

The copilot-to-autopilot question doesn’t have a universal answer. It has a framework: assess reversibility and blast radius for every action, build confidence levels into the agent’s decision-making, design approval gates that actually work, track the agent’s judgment empirically before expanding its autonomy, and maintain hard limits on actions where the cost of a wrong autonomous decision is simply too high.

The teams making the most progress with agentic DevOps are the ones who were most deliberate about the boundaries — and most rigorous about earning the right to expand them.

Autonomy is a consequence of demonstrated reliability, not a starting assumption.