Harness today added an ability to automatically secure code as it is being written by an artificial intelligence (AI) coding tool in addition to adding a module to its DevOps platform that discovers, tests, and protects AI components within applications.

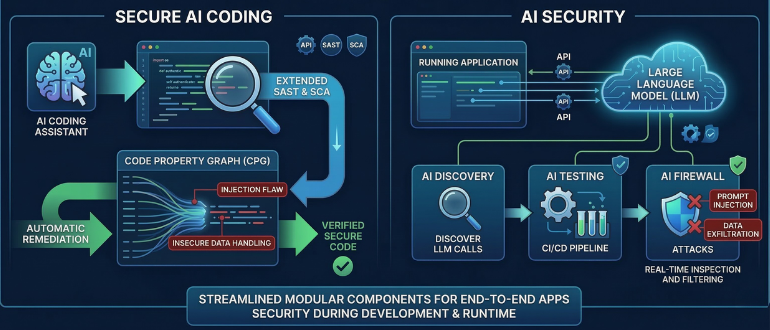

Secure AI Coding is an extension of the static application security testing (SAST) and software composition analysis (SCA) capabilities that Harness already provides. Additionally, Secure AI Coding leverages a Code Property Graph (CPG) developed by Harness to trace how data flows through the entire application to surface complex vulnerabilities such as injection flaws and insecure data handling.

The AI Security module, meanwhile, discovers every call to a large language model (LLM), Model Context Protocol (MCP) server or AI agent that is being made over an application programming interface (API).

At the same time, Harness today also revealed it has partnered with Wipro Ltd. to help organizations accelerate AI-native software delivery.

Rahul Sood, general manager for Harness, said the AI Security module is an extension of the API security capabilities that Harness already provides. The overall goal is to make it simpler to address application security issues both as developers write code and in a runtime environment, he added.

The AI Security module itself will have multiple components, starting with an AI Discovery tool that is now generally available. Harness has also developed an AI Testing tool that are purpose-built to discover threats to models in a way that is integrated within a continuous integration/continuous development (CI/CD) platform and an AI Firewall that inspects and filters LLM inputs and outputs in real time to block, for example, prompt injection attacks that could lead to data exfiltration. Both of those components are available in beta.

A recent Harness survey suggests application security issues are coming to a head in the age of AI. Two-thirds of respondents (66%) said they are flying blind when it comes to securing AI-native apps, with 63% reporting they believe AI-native applications are more vulnerable than traditional IT applications. Nearly half (48%) of security and engineering leaders are concerned about the vulnerabilities that come with it.

It’s not clear to what degree the rise of AI coding might force organizations to reevaluate the tools and platforms they are relying on today to manage DevOps workflows. Harness is betting that in many cases DevOps teams will soon look to streamline DevOps workflows using a set of modular components that can be mixed and matched as needed versus requiring them to standardize on one specific integrated platform. That approach enables DevOps teams to add capabilities over time as they eventually modernize software engineering workflows.

Ultimately, the best case scenario is to trigger scans as code is developed in a way that enables them to be automatically remediated, said Sood. However, in the event additional issues arise after code has been checked in, additional scans can be run both before and after an application is deployed, he noted.

The challenge, as always, is encouraging everyone involved in developing applications to follow the guidance being surfaced by AI tools that are designed to make up for the shortcomings of AI coding tools that many developers may have put too much faith in.